Come Behind Me, So Good!

- over 5 years ago

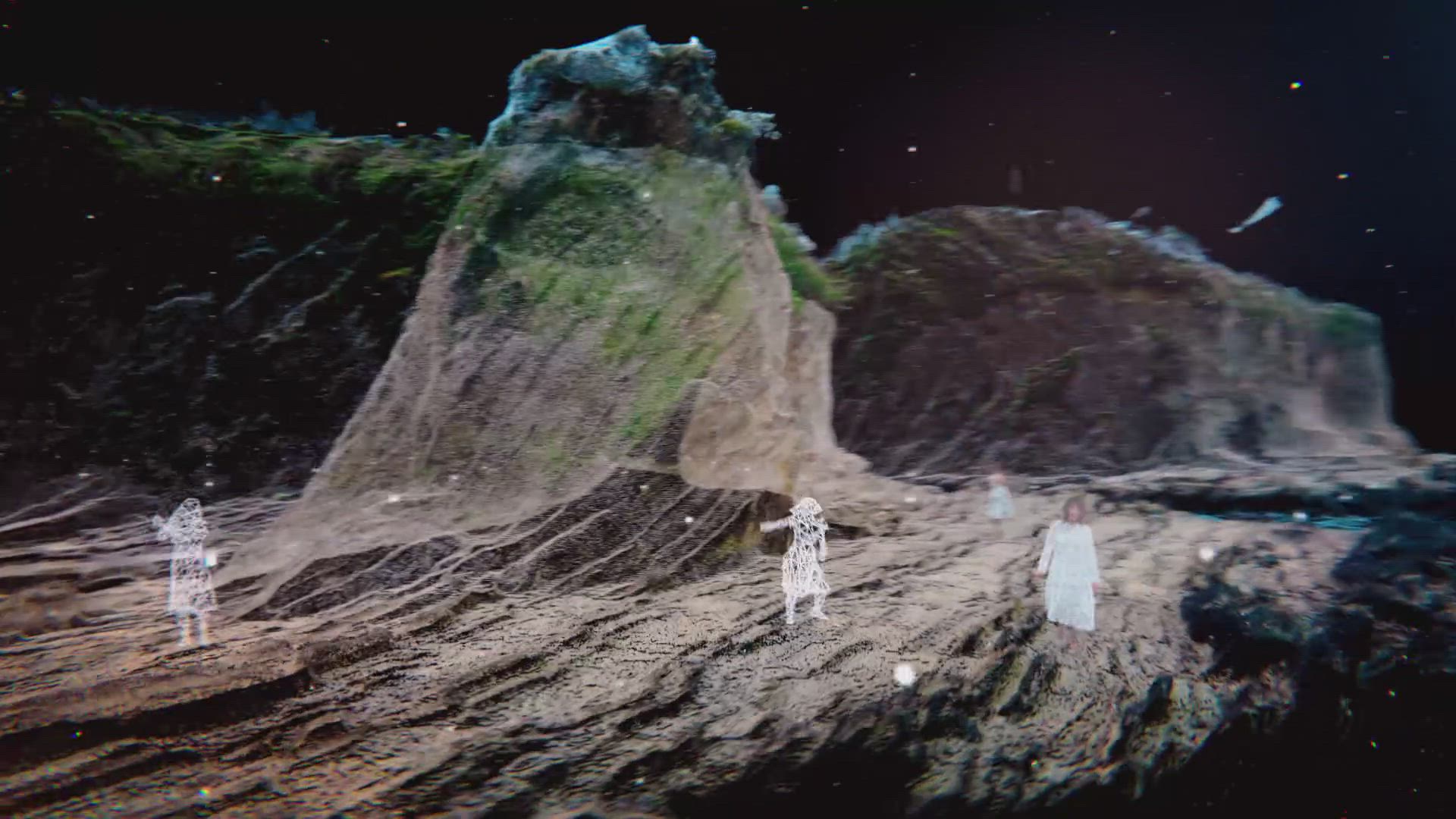

Computer animation music video directed by Daito Manabe

Music and vocals by Kazu Makino

Gear Used: DJI Inspire 2 X7 cinemaDNG RAW, DJI M600 Pro, Insta 360 Pro, Drone One Cut, Auto Pilot, Laser Scan, Point Cloud, 4D Views

━━ theme ━━━━━━━━━━━━━━━━━━━━

One of the challenges was to integrate individual technologies and techniques that have become accessible over the past few years to achieve a video with seamless transitions between the real and virtual worlds. The music's characteristic canon-style singing structure is expressed through a dance sequence in a beautiful natural location, where each dancer's motion shifts little by little. Using the drone's free viewpoint together with data of the landform and the whole dance sequence enables more flexible 3D expression: a camera sequence created in 3D software is merged with the drone's actual position and camera angles, obtained by analyzing the drone's flight log. The dance sequence data recorded with 4D Views can be freely arranged on the actual scene data of one-cut footage shot with a drone. The video goes seamlessly back and forth between the real world and a virtual world consisting of a point cloud made with a laser scanner, viewed from a free viewpoint.

━━ the inspiration for the project ━━━━━━━━━━━━━━━━━━

Our inspiration was to extend the free-viewpoint expression inside a virtual world with grid spaces, which Rhizomatiks has been working on, to “the cape” and “the waterfalls”, which actually exist in nature in the real world.

━━ something interesting that happened while filming ━━━━━━━━━━━━━

At first, we tried to convert the drone's flight log data into a camera trajectory (spline data) in Cinema 4D, but the drone used (DJI Inspire 2) was not compatible with RTK, and its GPS turned out to be inaccurate. In addition, GPS itself didn’t work well deep in the forest. Location data either could not be obtained from the log or, when it could, was too rough. It was not useful for production and we had to give up.
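To illustrate the kind of conversion attempted above, here is a minimal Python sketch (not the production pipeline) that turns logged GPS samples into local east/north/up offsets in metres, the sort of points a 3D package could take as spline control points. The function name `gps_to_local`, the tuple layout, and the sample coordinates are all assumptions made for illustration; a real flight log would first need to be parsed into this shape.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; adequate for short flights


def gps_to_local(samples):
    """Convert (lat_deg, lon_deg, alt_m) samples to (east, north, up) metres
    relative to the first sample, using an equirectangular approximation."""
    lat0, lon0, alt0 = samples[0]
    cos_lat0 = math.cos(math.radians(lat0))
    points = []
    for lat, lon, alt in samples:
        east = math.radians(lon - lon0) * EARTH_RADIUS_M * cos_lat0
        north = math.radians(lat - lat0) * EARTH_RADIUS_M
        points.append((east, north, alt - alt0))
    return points


# Hypothetical log excerpt (made-up coordinates, not from the shoot)
log = [
    (35.0000, 139.0000, 50.0),
    (35.0001, 139.0000, 52.0),
    (35.0001, 139.0001, 55.0),
]
path = gps_to_local(log)
```

Any error in the logged positions carries straight through this conversion into the camera path, which is why a log recorded without RTK, or under forest cover, gave a trajectory too rough to use.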
━━ a detailed description ━━━━━━━━━━━━━━━━━━━━

The first question we came across while considering the feasibility of the idea for this music video was whether it is possible to convert the flight path data of a drone into camera sequence data in CG and reuse it. The first thing we tried was to take the drone's flight log data and convert it to a camera trajectory (spline data) in Cinema 4D. Because the drone used (DJI Inspire 2) is a model that is incompatible with RTK, its GPS is not accurate enough. In addition, GPS itself didn’t work well deep in the forest. Location data either could not be obtained from the log or, when it could, was too rough. It was not useful for production and we had to give up.

In the course of further validation, we came up with the idea of using the drone's automated navigation app to shoot the same location multiple times at different times, for a seamless transition that goes back and forth between landscapes at different times of day. Furthermore, it occurred to us that a feasible solution would be to start from the real footage, shift into the same position in the CG data captured with a laser scanner, move around in the point-cloud world, and finally come back to the real footage.

DJI drones’ flight paths can be programmed with autopilot apps such as GSP and Litchi. In Litchi, not only the trajectory but also the altitude, flight speed, and camera angles can be set individually, and you can freely set which subject you want the drone to turn to. Once programmed, the drone can trace elegant and delicate sequences over and over again with an accuracy that would never be possible with manual operation. The programmed flight path data is very convenient because it can be reused with various drones, as long as they are compatible with Litchi.

The first step is to use the Mavic 2 Pro, the best location-hunting drone, to fly the camera manually according to the camera route data transcribed by the director, and to get a sense of the best shots. If we do not verify the flight route at this stage, the drone can fly the wrong path and be lost. After confirming that there is no conflict between the actual flight trajectory log and the map data of the automated navigation app, we register the flight path in Litchi (autopilot software), fly the drone, and verify the camera angles in actual flight. Once we had programmed the perfect sequence by fine-tuning the camera's trajectory, altitude, angles, turning points, and so on, we switched to another drone (Inspire 2 X7 RAW recorder) loaded with that programmed data and started the actual shooting.

We do multiple shoots at different times and on different days. The drone’s position is not exactly the same on each flight, because of the wind speed at the time and the GPS conditions (accuracy varies depending on the conditions), but this method still lets you capture the same scene with high accuracy and a different impression each time. You can get a more dynamic result if you shoot the same scene at different times across seasons, or even over several years.

Credits:

- Direction - Daito Manabe (Rhizomatiks) + Kenichiro Shimizu (PELE)
- 4D Views - Crescent, inc.
- 3D Scan - Katsuya Tsukui, Yusuke Hayashi (Picture Element)
- Drone Pilot - Yuhki Endoh (HEXaMedia Inc.)
